Choosing a Multiuser Jupyter Platform - SageMaker vs. JupyterHub vs. Vertex AI

Are you thinking about how to set up a Jupyter platform for your team?

Lightweight notebook services like Google Colab allow you to run notebooks on small datasets with limited functionality. While these kinds of managed notebook services are useful for small analysis tasks, they are not suited to a production data science or machine learning workflow because they:

- have limited compute and memory,

- don’t integrate with other software development tools like version control,

- don’t have role-based access control, and

- don’t work well on large datasets, because you do not have access to a long-running VM or a persistent disk.

Your professional data science or machine learning team needs a more powerful Jupyter platform, one that is scalable, integrated with your data pipelines, and includes access control. Here we take a look at three enterprise notebook options, ranging from an entirely self-managed JupyterHub to fully vendor-managed cloud solutions like AWS SageMaker and GCP Vertex AI.

Do it Yourself (JupyterHub)

Doing it yourself can mean anything from running Jupyter locally on bare-metal servers to using cloud-based Kubernetes engines. In some contexts, bare metal is the right solution: if you work with large scientific datasets stored on local infrastructure that requires custom software to access them, hosting Jupyter locally may be your best option. Alternatively, if you are willing to host Jupyter in the cloud but you want full control over the configuration, or if you want to ensure that your solution is cloud-agnostic, setting up your own Jupyter installation on cloud infrastructure may be right for you.

JupyterHub provides a multi-user platform for Jupyter that your team members can log in to and run notebooks on. There are two JupyterHub distribution options: The Littlest JupyterHub for small-scale JupyterHub instances and a Kubernetes-based deployment for larger-scale deployments with a hundred or more users. Both options can be used with the most common cloud providers or as bare-metal installations.

One concern you may have about self-hosting JupyterHub is security: you will need to set up HTTPS and authentication (JupyterHub uses PAM authentication by default). JupyterHub supports integration with OAuth identity providers such as GitHub, GitLab, and Google, and also supports LDAP and Active Directory authentication.
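
As a rough illustration of the security setup described above, the sketch below enables HTTPS and swaps the default PAM authenticator for GitHub OAuth. It assumes the separately installed `oauthenticator` package. The helper name `apply_github_auth` is hypothetical (it is not part of JupyterHub), and every value passed to it is a placeholder you would supply yourself.

```python
def apply_github_auth(c, *, client_id, client_secret, callback_url,
                      allowed_users, ssl_cert, ssl_key):
    """Set HTTPS and GitHub OAuth options on a JupyterHub config object.

    Hypothetical helper: `c` is the object that get_config() returns
    inside jupyterhub_config.py. Assumes `oauthenticator` is installed.
    """
    # Serve the hub over HTTPS using your own certificate and key files.
    c.JupyterHub.ssl_cert = ssl_cert
    c.JupyterHub.ssl_key = ssl_key

    # Replace the default PAM authenticator with GitHub OAuth.
    c.JupyterHub.authenticator_class = "github"
    c.GitHubOAuthenticator.client_id = client_id
    c.GitHubOAuthenticator.client_secret = client_secret
    c.GitHubOAuthenticator.oauth_callback_url = callback_url

    # Only these GitHub usernames are allowed to log in.
    c.Authenticator.allowed_users = set(allowed_users)
    return c
```

In `jupyterhub_config.py` you would call this with `get_config()` as the first argument, ideally reading the client secret from an environment variable rather than hard-coding it.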

JupyterHub Pros:

- You are not locked into any particular cloud vendor.

- You can monitor costs closely and keep costs down by avoiding the extra costs associated with managed notebook services.

- You have full control over configuration and can add any integrations or add-ons you want.

Cons:

- You will need to do some low-level setup and maintenance, including security and authentication.

- You will need to manage connections to external datasets.

- You will need to set up integrations with version control systems for notebook sharing & collaboration.

Google Vertex AI Workbench

Google’s Jupyter offering is Vertex AI, a suite of machine learning functionality that includes feature stores, training pipelines, model registries, and endpoints, all available within the Google Cloud Platform (GCP). Vertex AI Workbench is the enterprise edition, which can be either user-managed or fully managed. The user-managed Vertex AI Workbench is a simple JupyterLab instance with a choice of kernels. The fully managed option includes extra functionality and integrations.

The fully managed Vertex AI Workbench option offers convenient, built-in integrations with Google Cloud Storage and Google Cloud BigQuery, and is easy to integrate with GitHub, all from within your JupyterLab environment. You can control compute on a per-notebook level and configure automated shutdown for idle instances. The Vertex AI Workbench notebook executor feature allows you to schedule notebook runs and save the output to Google Cloud Storage, where it can be shared.

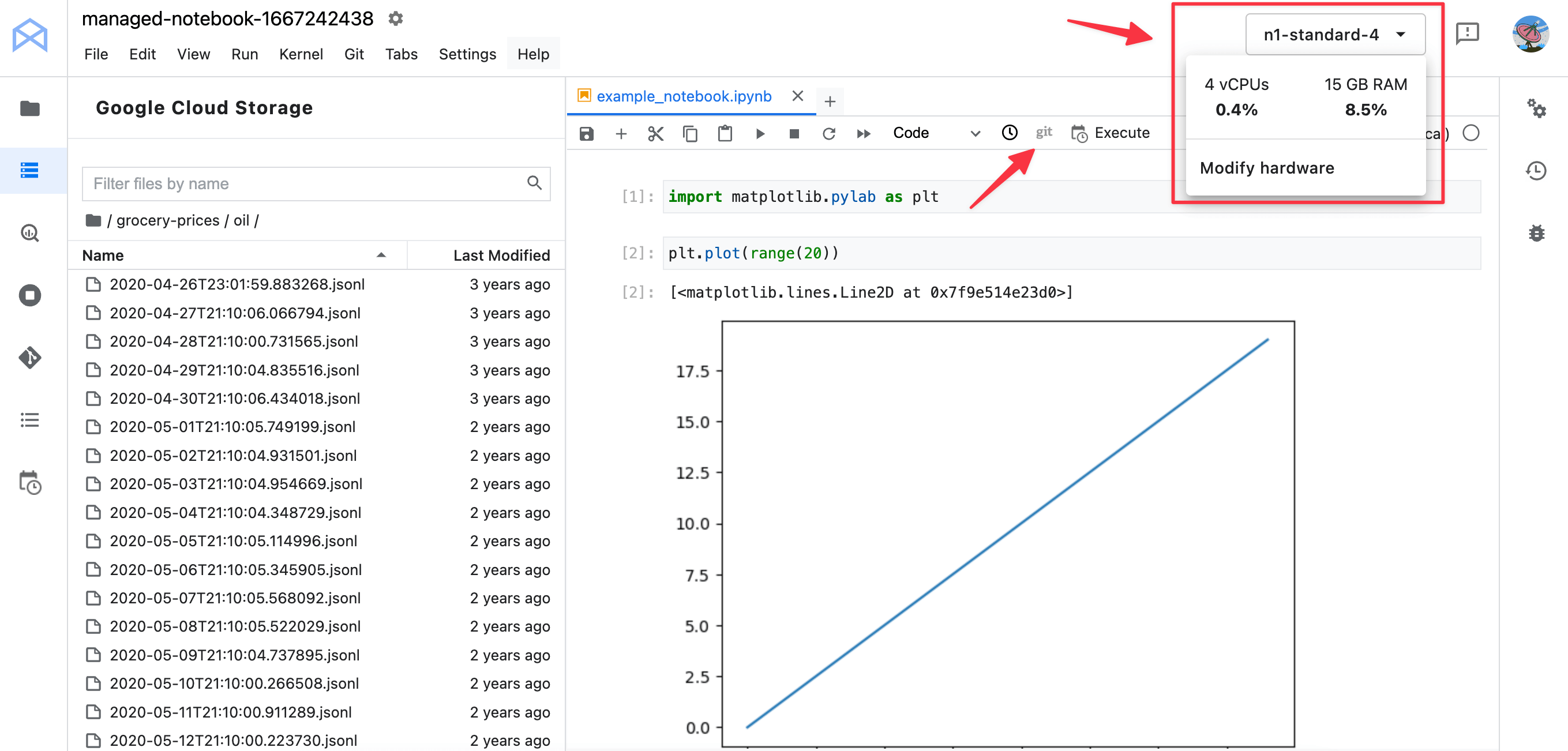

The screenshot above shows a managed notebook instance. Notice the compute details drop-down on the top right, where you can modify the compute for your notebook. You can also see the Google Cloud Storage navigation panel on the left, where you can browse stored files as if they were local. On the navigation bar at the top of the notebook you can see a git option: if you have set up a GitHub repo, this button lets you run an nbdiff comparison against the current git HEAD. The Execute command allows you to set up an execution schedule for your notebook.

Access to Vertex AI Workbench is managed through GCP with authentication and authorization controls provided as part of the GCP platform. If you want multiple users to access the same Workbench JupyterLab instance, you can set up permissions with a service account that multiple users can be given access to. These users can view and edit the same running notebook.

The cost of using Vertex AI Workbench is the cost of the compute and storage resources your notebooks use, plus management fees. Management fees for the fully managed option are about 10x higher than the user-managed option, and Vertex AI Workbench only supports the more expensive on-demand compute options, not spot compute. However, when you create a Jupyter instance, the Vertex AI Workbench UI provides an estimate of the cost based on the parameters you choose.

Pros:

- Authentication through GCP.

- Lower maintenance overhead.

- Scalable compute, including GPU options.

- With the managed option, integration with GCP storage options and GitHub, and the ability to schedule notebook runs.

- Notebooks can be shared.

- Cost estimate on creation.

- Automated shutdown of notebooks.

Cons:

- Management fees make it more expensive, with higher costs for the managed option.

- Third-party JupyterLab extensions such as Jupytext are not supported.

Amazon SageMaker

SageMaker is Amazon Web Services’ machine learning product, which offers a suite of machine learning functionality similar to Google’s Vertex AI. SageMaker has two Jupyter Notebook products:

- SageMaker Notebook Instance, the more straightforward, cloud-based notebook service.

- SageMaker Studio, a more sophisticated platform that extends JupyterLab.

SageMaker Studio comes with many plug-ins and extensions that allow for easy integration with the rest of the SageMaker suite. The SageMaker Pipelines extension, for example, provides a SageMaker Studio-exclusive UI that allows you to watch your pipeline running. Third-party Jupyter extensions such as Jupytext are included.

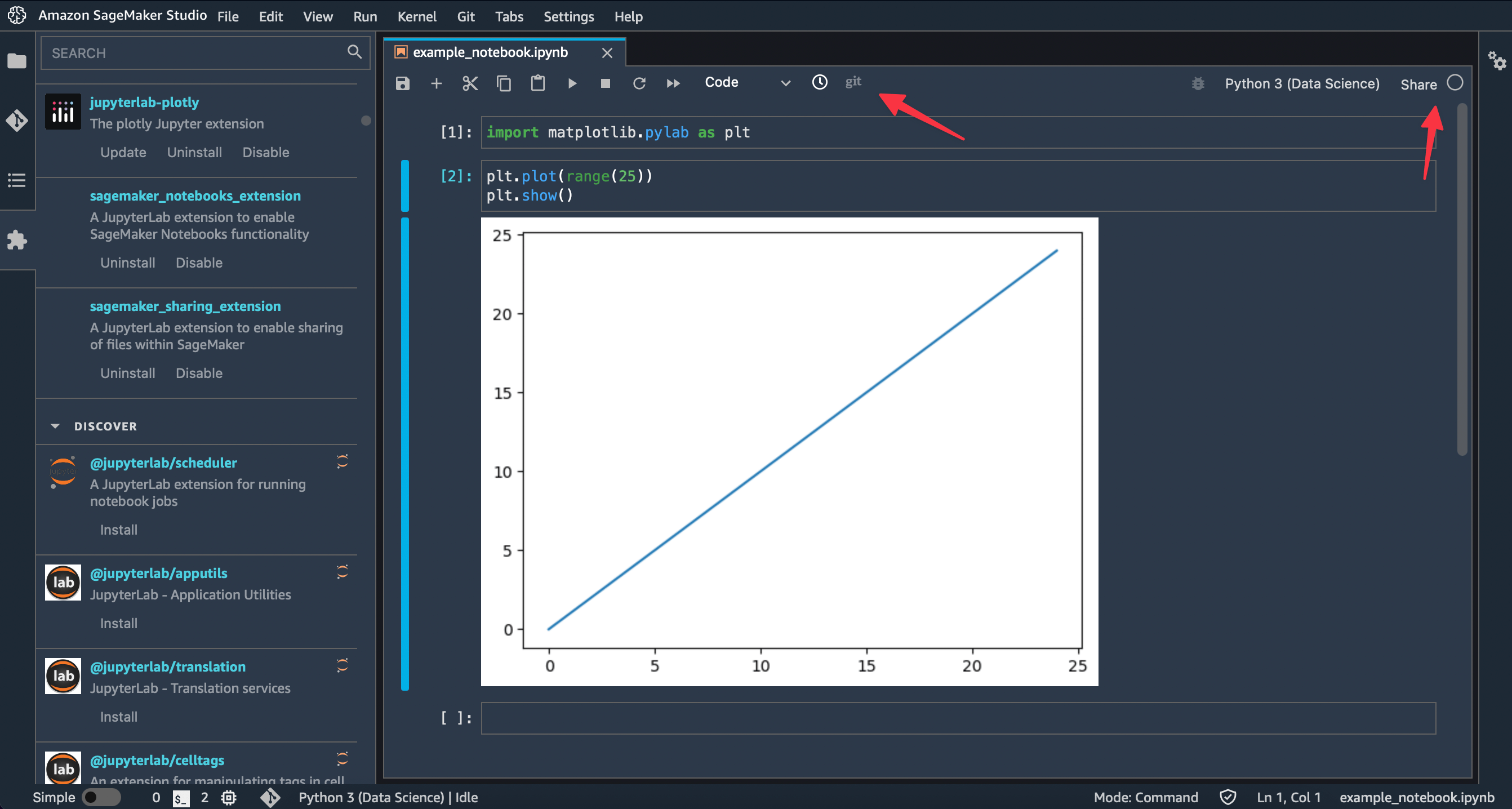

The screenshot above shows some of the plug-in options the SageMaker Studio console offers. Although SageMaker Studio doesn’t offer native notebook scheduling, you can set up scheduling manually by adding the third-party jupyter-scheduler extension.

Both SageMaker Notebook Instance and SageMaker Studio can be accessed via the AWS console, and handle authentication and authorization through AWS IAM. SageMaker Studio also offers authentication via SSO, so that users can log in to SageMaker Studio without having to go through the AWS console.

Both SageMaker options include built-in GitHub integration and can display Git diffs for notebooks against the current Git HEAD. You can see the git toolbar option in the screenshot above.
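
To make the idea of a notebook-aware diff concrete, here is a minimal sketch that compares only the code cells of two versions of a notebook and ignores outputs and metadata. It is a simplified illustration built on Python's standard library, not how SageMaker or nbdiff actually implement their diffs, and the function names are made up for this example.

```python
import difflib
import json


def code_lines(notebook_json: str) -> list[str]:
    """Return the lines of every code cell in an .ipynb document, in order."""
    notebook = json.loads(notebook_json)
    lines = []
    for cell in notebook.get("cells", []):
        if cell.get("cell_type") != "code":
            continue  # skip markdown and raw cells
        source = cell.get("source", "")
        # The notebook format stores source as one string or a list of lines.
        text = source if isinstance(source, str) else "".join(source)
        lines.extend(text.splitlines())
    return lines


def notebook_code_diff(old_json: str, new_json: str) -> list[str]:
    """Unified diff of the code cells only; cell outputs are never compared."""
    return list(
        difflib.unified_diff(
            code_lines(old_json),
            code_lines(new_json),
            fromfile="HEAD",
            tofile="working copy",
            lineterm="",
        )
    )
```

Passing the committed and the edited notebook contents to `notebook_code_diff` yields the changed code lines, and two notebooks that differ only in their outputs produce an empty diff.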

SageMaker Studio offers scalable compute and the option of using different compute types for different notebooks. Data is stored in elastic file storage, where it can be used by multiple notebooks.

SageMaker Studio notebooks can be shared, but what is shared is a copy of the notebook, so subsequent changes to the original won’t be reflected in the shared copy. This means you need a version control system like GitHub for collaborative work.

Pros:

- Authentication through AWS or SSO.

- Lower maintenance overhead.

- Scalable compute, including GPU options.

- Integration with GitHub and built-in support for GitHub diffs.

- Many plug-ins and integrations with both other components in the SageMaker suite and external Jupyter extensions.

Cons:

- Only copies of notebooks can be shared.

- Cost of SageMaker instances can be 20% to 40% more than an equivalent EC2 instance.

- Customising your JupyterLab environment with add-ons & extensions can be tricky.
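
To see what the 20% to 40% premium mentioned above means in practice, the following sketch applies it to an EC2 cost. The function name and the example figure of $100 are made up for illustration; substitute the real cost of the equivalent EC2 instance you are comparing against.

```python
def sagemaker_cost_range(ec2_cost: float) -> tuple[float, float]:
    """Apply a 20% to 40% premium to the cost of an equivalent EC2 instance."""
    return (ec2_cost * 1.2, ec2_cost * 1.4)


# 100.0 is a hypothetical EC2 bill, not a quoted price.
low, high = sagemaker_cost_range(100.0)
print(f"Estimated SageMaker cost: ${low:.2f} to ${high:.2f}")
# prints "Estimated SageMaker cost: $120.00 to $140.00"
```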

TL;DR

JupyterHub is your best option for running Jupyter Notebooks on bare metal, or if you want your notebooks to be highly configurable and you are comfortable doing your own lower-level setup and maintenance. This option gives you full control over cost and configuration, and avoids vendor lock-in.

If you would prefer not to deal with the details of installation and maintenance, or you want to make use of other components of cloud machine learning platforms, both Google Vertex AI and Amazon SageMaker are good options. While Vertex AI and SageMaker have similar overall functionality, Vertex AI wins on notebook sharing and built-in notebook scheduling, and SageMaker wins on its integrations with the rest of the SageMaker suite and third-party Jupyter extensions.

Cost-wise, the pricing will depend on your setup. It is easy to end up with an unexpectedly high bill if you use large compute resources, so it’s worth monitoring your spend closely when you first set things up. When you create a Workbench notebook instance, the GCP UI will give you an estimate of cost. SageMaker doesn’t provide a cost estimate at setup, but does provide a general cost calculator.

The table below shows a rough comparison of the cost to set up a notebook instance:

| Notebook Instance | RAM | vCPU | Persistent Disk Size | Cost |
|---|---|---|---|---|
| JupyterHub (Amazon EC2) | 16GB | 4 | 100GB | $71 |
| Google Workbench | 15GB | 4 | 100GB | $202 |
| SageMaker Studio | 16GB | 4 | - | $165 |
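
For a quick sense of scale, the figures in the table can be expressed relative to the self-hosted option. This is only arithmetic on the numbers above. Note that the three configurations are not identical (the Workbench instance has 15GB of RAM and the SageMaker Studio row lists no persistent disk), so treat the ratios as rough.

```python
# Costs copied from the comparison table, in the same units as the table.
costs = {
    "JupyterHub (Amazon EC2)": 71,
    "Google Workbench": 202,
    "SageMaker Studio": 165,
}

baseline = costs["JupyterHub (Amazon EC2)"]
ratios = {name: cost / baseline for name, cost in costs.items()}

for name, ratio in ratios.items():
    print(f"{name}: {ratio:.1f}x the self-hosted cost")
# prints:
# JupyterHub (Amazon EC2): 1.0x the self-hosted cost
# Google Workbench: 2.8x the self-hosted cost
# SageMaker Studio: 2.3x the self-hosted cost
```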

If you found this article useful, check out ReviewNB, which helps you with code reviews & collaboration on Jupyter Notebooks.